Camera_Map_Per_Pixel compositing

When you build a 3D mesh from photos with software such as 123D Catch, PhotoScan, or Photomodeler, the export to other 3D software includes the original positions and directions of the cameras used to take the photos. The software also generates a texture from the photos. Because the scanned mesh consists mostly of triangles and its UVs are simply flattened, it needs to be ‘retopologized’: in other 3D software, the scanned mesh is overlaid with a cleaner mesh that is usable for animation and similar work. The UVs are also redone so that they have only a few seams.

Currently, after retopologizing, the scanned texture is projected onto the new model and that is it. Holes in the scanned mesh that were patched in the new model cannot be filled by projecting the old texture onto the new model. The texture resolution is also fixed by the scanned textures and can’t be redone in 3D.

To overcome this, you can use a Camera Map (per pixel) and reproject a photo onto the new model. If you do this for every camera in the scene, a new texture is formed that covers the model all the way around (360 degrees).

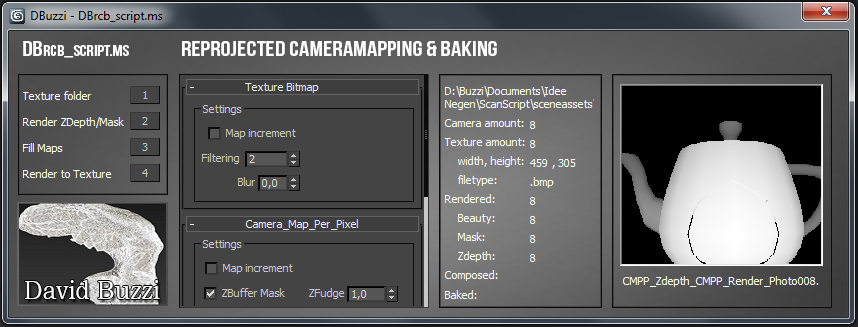

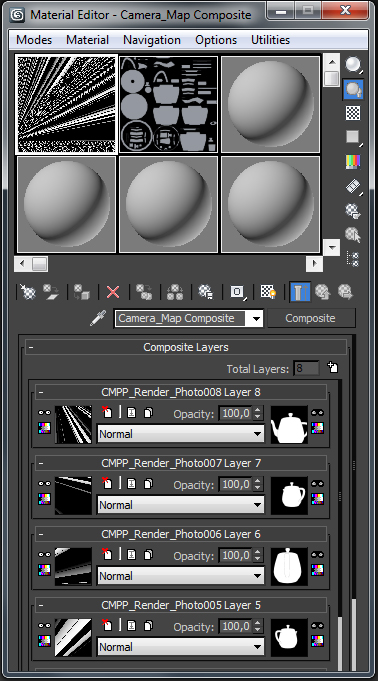
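To make the reprojection idea concrete, here is a minimal Python sketch of how a point on the new model could be mapped back to a pixel in one of the photos. It assumes an ideal pinhole camera with a horizontal field of view and no lens distortion. The function name `project_point` and all of its parameters are hypothetical and are not part of the script or of 3ds Max.

```python
import math

def dot(a, b):
    """Dot product of two 3D vectors given as tuples."""
    return sum(x * y for x, y in zip(a, b))

def project_point(point, cam_pos, cam_right, cam_up, cam_forward,
                  fov_deg, width, height):
    """Project a world-space point into the pixel coordinates of a photo.

    Assumes an ideal pinhole camera (hypothetical model, no distortion).
    cam_right, cam_up and cam_forward are unit vectors describing the
    camera orientation; fov_deg is the horizontal field of view.
    Returns (u, v) in pixels, or None if the point is behind the camera.
    """
    # Vector from the camera to the point, expressed in camera axes.
    offset = tuple(p - c for p, c in zip(point, cam_pos))
    x = dot(offset, cam_right)
    y = dot(offset, cam_up)
    z = dot(offset, cam_forward)
    if z <= 0:
        return None  # behind the camera, nothing to project

    # Focal length in pixels, derived from the horizontal field of view.
    focal = (width / 2.0) / math.tan(math.radians(fov_deg) / 2.0)

    # Perspective divide; image v runs downward, so flip the y axis.
    u = width / 2.0 + focal * x / z
    v = height / 2.0 - focal * y / z
    return (u, v)

if __name__ == "__main__":
    # A camera at the origin looking down +Z with a 90 degree field of view.
    uv = project_point((0.0, 0.0, 5.0), (0.0, 0.0, 0.0),
                       (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0),
                       90.0, 100, 100)
    print(uv)
```

A point straight in front of the camera lands in the centre of the image. Repeating this lookup for every texel of the new UV layout, once per camera, is the general idea behind per-pixel camera mapping.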

This script fills a Composite map with as many Camera_Map_Per_Pixel maps as there are cameras and textures. ZDepth and Mask renders can be added for extra control, and the result can be baked into a new Bitmap texture. By finding the correct Angle Threshold and correcting the masks, you can create a new, high-definition map.
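The role of the Angle Threshold can be illustrated with a small Python sketch. It assumes that the threshold compares the angle between the surface normal and the direction from the surface to the camera, and that layers are stacked so that a higher layer covers a lower one wherever it passes the threshold. Both are simplifying assumptions; the names `facing_angle_deg` and `composite_layers` are hypothetical and do not come from the script.

```python
import math

def normalize(v):
    """Return the unit-length version of a 3D vector."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def facing_angle_deg(normal, surface_point, cam_pos):
    """Angle in degrees between the surface normal and the direction
    from the surface point to the camera (0 means facing the camera)."""
    to_cam = normalize(tuple(c - p for c, p in zip(cam_pos, surface_point)))
    n = normalize(normal)
    cos_angle = sum(a * b for a, b in zip(n, to_cam))
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))

def composite_layers(layers, angle_threshold_deg,
                     background=(0.0, 0.0, 0.0)):
    """Stack projected colours, bottom layer first.

    layers is a list of (colour, angle_deg) pairs, one per camera.
    A layer only contributes where its angle is within the threshold,
    which acts like a hard mask (an assumed, simplified behaviour).
    """
    result = background
    for colour, angle in layers:
        if angle <= angle_threshold_deg:
            result = colour  # this layer covers everything below it
    return result

if __name__ == "__main__":
    point, normal = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)
    front = facing_angle_deg(normal, point, (0.0, 0.0, 10.0))
    side = facing_angle_deg(normal, point, (10.0, 0.0, 0.0))
    red, green = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)
    # The side camera sees the surface at a grazing angle and is masked out.
    print(composite_layers([(red, front), (green, side)], 60.0))
```

A lower threshold rejects more grazing-angle projections, which tend to look stretched, at the cost of leaving more areas to be covered by other cameras or corrected in the masks.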

For now it is a basic script. If you need any additional features, I hope to hear from you…

greetings,

David Buzzi

Comments

Great Idea

I have been using a similar approach to camera map an object with different cameras, but doing it one camera at a time and painting masks by hand to create the transitions between cameras... Your script seems like it may make the process quicker. Thanks for sharing!